Screens Accuracy Evaluation Report

Executive Summary

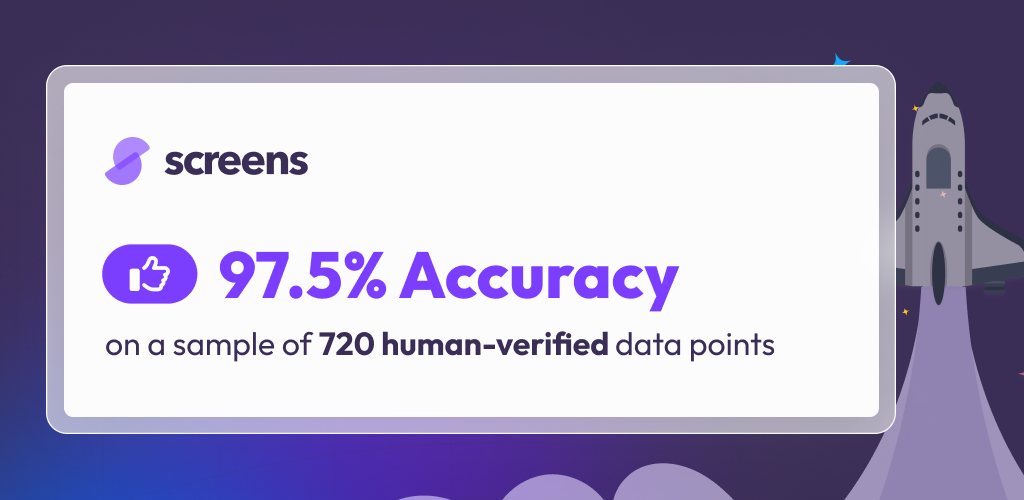

Evaluating the accuracy of large language models (LLMs) on contract review tasks is critical to understanding reliability in the field. However, objectivity is a challenge when evaluating long-form, free-text responses to prompts. We present an evaluation methodology that measures an LLM system’s ability to classify a contract as meeting or not meeting sets of substantive, well-defined standards. This approach serves as a foundational step for various use cases, including playbook execution, workflow routing, negotiation, redlining, summarization, due diligence, and more. We find that the Screens product, which employs this system, achieves a 97.5% accuracy rate. Additionally, we explore how different LLMs and methods impact AI accuracy.

Note: The full report follows. Artificial Lawyer also covered the report in its article “Screens Publicly Announces 97.5% GenAI Accuracy in Transparency Move.”

Introduction

Screens is an LLM-powered contract review and redlining tool that runs in a web application and directly in Microsoft Word. Our customers use Screens to review and redline individual contracts or to analyze large sets of contracts in bulk. The core abstraction that enables this is what we call a screen. A screen contains any number of standards. Standards are criteria that are either met or not met in any given contract. Our users draft and perfect standards to test contracts for alignment with their team or client’s preferences, risk tolerance, negotiating power, and perspectives on contract issues.

Here is an example of a common standard:

The vendor must indemnify the customer for third-party IP infringement.

Examples of more complex standards are included under Example Standards below.

The Screens platform enables users to test for standard compliance in contracts automatically and at scale using retrieval augmented generation (RAG) powered by commercial LLMs. They can review these results in several different experiences throughout the platform and within the Microsoft Word add-in. This is all orchestrated by our proprietary scaffolding. This includes:

- Advanced domain-specific RAG techniques powered by internal research and development

- Validation and standard tuning workflows built into the web application that enable users to customize and optimize their standards

- The Screens Community – a growing set of curated screens containing standards drafted by experts in the given contract domain

- Contract ingestion and parsing across common document types:

  - PDFs, including enterprise-quality OCR

  - Microsoft Word documents

  - Publicly hosted contracts (terms of service and privacy policies)

We’ve put this report together to showcase the accuracy of the system in what we think is a fair and transparent way. We’ve labeled data and curated training and test sets, while taking care to mitigate data leakage between the two. We’ve benchmarked the system using a few popular commercial LLMs. We’ve collected results with and without various aspects of our production system that we use to improve results. Our goal is to pull back the curtain and offer transparency into how we think about building and evaluating LLM-powered contract review tools.

Methodology

Our evaluation methodology is underpinned by the structure of our application and the specific type of problem that we are interested in solving with LLMs: determining if substantive legal standards are met in a contract.

There are many applications of contract review that are downstream of deciding if a standard is met: redlining, negotiation, summarization, due diligence, competitive benchmarking, deviation analysis, risk scoring, and more. However, we take the task of this binary decision as a starting point for evaluation. We do this for multiple reasons:

- It sits at the foundation of our product so it’s important for us to get right. We find that all of the use cases mentioned above get easier when you first correctly predict which of a set of well-defined standards are met in a contract. If this goes poorly, errors will compound. If it goes well, downstream use cases like redlining and negotiation get easier.

- It is easy to evaluate: we can label data sets with the correct answers, deploy an LLM-based system against that data set, and calculate traditional machine learning validation metrics like accuracy, precision, recall, and F1. We avoid the subjectivity associated with evaluating a redlining or negotiating exercise, where we see disagreement among well-intentioned experts and stylistic opinions independent of the substantive concern.

Artificial Lawyer discusses “the mess we are in” with regard to evaluating the accuracy of legal AI tools. There are multiple use cases, each with different stakes and accuracy requirements. The prevalence of hallucinations varies depending on whether you are conducting case law research, reviewing contracts, drafting contracts, or performing some other legal task. A comprehensive evaluation of legal AI tools is outside the scope of this report. We aim to contribute to the conversation within our specific area of interest.

Problem Setup

This can be expressed as a binary classification problem. Standards either pass or fail for a given contract, and they can be labeled as such to construct two data sets: a training set and a test set. The training set is used to iterate on each standard to optimize its performance. The test set is used to evaluate accuracy. The test set consists of contracts that were not seen during the standard optimization phase. This is to ensure that the standards are not overfit to the contracts in the training set.
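One way to keep test contracts unseen during tuning is to split at the contract level rather than the record level, so that every pass/fail decision for a given contract lands on the same side of the split. The sketch below is a minimal illustration of that idea and is not the procedure used for this report; the record keys (`contract_id`, `standard_id`, `label`) and the `test_fraction` parameter are assumptions made for the example.

```python
import random
from collections import defaultdict


def split_by_contract(records, test_fraction=0.4, seed=0):
    """Split labeled records so that no contract appears in both sets.

    `records` is a list of dicts with hypothetical keys:
    "contract_id", "standard_id", and "label" ("pass" or "fail").
    """
    by_contract = defaultdict(list)
    for record in records:
        by_contract[record["contract_id"]].append(record)

    # Shuffle contract IDs (not individual records) with a fixed seed.
    contract_ids = sorted(by_contract)
    random.Random(seed).shuffle(contract_ids)

    n_test = max(1, round(len(contract_ids) * test_fraction))
    test_ids = set(contract_ids[:n_test])

    train = [r for cid in contract_ids if cid not in test_ids for r in by_contract[cid]]
    test = [r for cid in contract_ids if cid in test_ids for r in by_contract[cid]]
    return train, test


# Toy data: 5 contracts with 3 standards each (illustrative only).
records = [
    {"contract_id": f"c{c}", "standard_id": f"s{s}", "label": "pass"}
    for c in range(5)
    for s in range(3)
]
train_set, test_set = split_by_contract(records)
```

Splitting on contract IDs means a standard can appear in both sets, but the text it is judged against never does.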

Data Labeling

The training and test sets were labeled by multiple in-house contract reviewers with expertise in the subject matter of the given contract types and experience with prompt engineering. They’ve labeled contracts as either passing or failing each standard by reviewing the contracts in full.

Dataset Composition

The test set consists of a mix of various contracts, contract types, standard types, and standard difficulty. By the numbers:

- 720 applications of a pass/fail decision

- 51 contracts

- 132 unique standards

It spans the following contract types:

- Master Service Agreement

- Software Terms of Service

- Data Processing Agreement

- Non-disclosure Agreement

- Privacy Policy

Tuning the Standards

The standards used in this analysis were tuned by our internal reviewers using the training set. They used the Screens platform’s built-in validation functionality to iteratively tune the language used, the conditions and exclusions outlined, how to handle corner cases, how to handle the absence of relevant text, etc. The training set contains 1,202 applications of pass/fail decisions across 76 contracts. This brings the size of the combined dataset to 1,922 records across 127 contracts.

The results of this report are for the test set. This allows us to isolate the analysis, focusing on the system’s generalizability beyond contracts seen during the standard tuning phase. When we perform an evaluation run on the training set, we see similar results. This implies that the standard tuning phase generalizes well to unseen contracts and is therefore not an exercise in overfitting.

Example Standards

While many of the standards used are in publicly available community screens, we’ve listed some here for convenience. This offers a sense of the range of standards used:

The vendor must not completely disclaim all liability. Some examples of complete disclaimers of all liability include (a) requiring the customer to waive all direct damages, and (b) capping the vendor's liability at $0 with no exceptions to the cap. If there are any exceptions to what would otherwise be a complete disclaimer of all liability (e.g., if there is a complete disclaimer of all liability, but there are one or more carve outs), this would not fail.

The agreement should not permit an assignment without the other party's consent. If the agreement allows either party to assign, in any case, consent of the other party should be required. If the agreement does not address assignment, the agreement should pass. If the agreement allows a party to assign without consent in the case of a merger, acquisition, reorganization, consolidation, successor in interest, or similar, this should fail.

The contract should require that a party seeking indemnification make certain procedural commitments regarding the defense and settlement of a claim. Commitments include: (a) providing prompt notice of a claim; (b) surrendering the exclusive control of the investigation, defense, and settlement of a claim; AND (c) providing reasonable cooperation with the defense of a claim.

Standards often go beyond basic data extraction and clause identification, requiring substantive legal reasoning and potential cross references across multiple touch points in the contract.

The LLM’s Task

The meta-prompt tasks the LLM to respond with multiple components to its answer. It is given a general description of the problem setup, the standard, and text from the contract (using some variation of our proprietary RAG system to identify the relevant text). The LLM must respond with:

- A definitive yes or no answer as to whether the contract meets the standard

- An explanation as to how it arrived at this answer

- The sentence in the contract that best proves the veracity of the answer (or lack thereof if the standard is not addressed)

This is packaged up into a proprietary multi-hop chain-of-thought (CoT) prompting and retrieval system.
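The three-part response described above could be represented and validated roughly as follows. This is a sketch under stated assumptions: the field names (`meets_standard`, `explanation`, `supporting_sentence`) and the JSON shape are hypothetical and do not reflect the actual Screens schema.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class StandardResult:
    meets_standard: bool                # the definitive yes/no answer
    explanation: str                    # how the model arrived at the answer
    supporting_sentence: Optional[str]  # None when the contract does not address the standard


def parse_result(raw: str) -> StandardResult:
    """Parse and validate a JSON reply; raise ValueError on malformed output."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    if not isinstance(data.get("meets_standard"), bool):
        raise ValueError("'meets_standard' must be true or false")
    if not isinstance(data.get("explanation"), str):
        raise ValueError("'explanation' must be a string")
    sentence = data.get("supporting_sentence")
    if sentence is not None and not isinstance(sentence, str):
        raise ValueError("'supporting_sentence' must be a string or null")
    return StandardResult(data["meets_standard"], data["explanation"], sentence)


# Hypothetical model reply, written for illustration only.
example_reply = (
    '{"meets_standard": false, '
    '"explanation": "No indemnity clause was found.", '
    '"supporting_sentence": null}'
)
result = parse_result(example_reply)
```

Rejecting malformed replies up front keeps a formatting failure from being silently counted as a pass or a fail.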

Caveats

We only evaluate whether the LLM provides the correct pass or fail response. We do not evaluate whether its stated reasoning or the sentence that it cites is accurate. The latter two tasks are subjective. While this is a limitation of this report, we do observe anecdotally that these are correlated, if not causal. It’s intuitive that an LLM-based system that consistently cites the wrong sections of the contract and produces faulty reasoning will inevitably result in more incorrect pass or fail answers.

Results

Overall

On the test set described in this report, Screens’ production AI system (built on top of commercial LLMs) produced the following results:

- Accuracy: 97.50%

- Precision: 0.9857

- Recall: 0.9822

- F1: 0.9840
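As a quick consistency check, F1 can be recomputed from the reported precision and recall, since it is their harmonic mean. The sketch below uses only the rounded figures listed above, so it compares with a small tolerance rather than expecting an exact match; the tolerance value is an assumption.

```python
def f1_score(precision: float, recall: float) -> float:
    """F1 is the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision, recall, and F1 as reported above (already rounded to four decimals).
reported_precision = 0.9857
reported_recall = 0.9822
reported_f1 = 0.9840

recomputed_f1 = f1_score(reported_precision, reported_recall)

# The inputs are rounded, so allow a small tolerance instead of exact equality.
consistent = abs(recomputed_f1 - reported_f1) < 5e-4
```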

Without Guidance

The Screens platform allows users to add what we call AI Guidance to enhance the LLM’s performance when deciding if standards are met or not in a contract. This guidance is intended to mitigate Guidance Errors described in more detail in the Error Types section of this report. The guidance allows the drafter of the standard to get more explicit about how they expect the LLM to handle edge cases, exceptions, contradictions, etc.

If we remove this guidance and run the same production AI system on the same test set, we see the following results:

- Accuracy: 89.29%

- Precision: 0.9567

- Recall: 0.9039

- F1: 0.9296

Comparing results with and without guidance, AI Guidance provides a lift in accuracy of roughly 8 percentage points (from 89.29% to 97.50%). Put differently, we see roughly a 75% reduction in model errors by introducing well-clarified AI Guidance, as the error rate falls from 10.71% to 2.50%. In broader terms, adding AI Guidance can be interpreted as more explicit prompting: telling the LLM how you want it to handle edge cases, understand your preferences, interpret your internal definitions of colloquial terminology, etc. instead of leaving the LLM to assume.
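The two ways of stating the improvement (absolute lift versus relative reduction in errors) follow from the reported accuracies. A minimal sketch of the arithmetic, with helper names chosen for this example:

```python
def error_rate(accuracy_pct: float) -> float:
    """Error rate in percent, given accuracy in percent."""
    return 100.0 - accuracy_pct


def relative_error_reduction(baseline_accuracy_pct: float, improved_accuracy_pct: float) -> float:
    """Fraction of the baseline's errors eliminated by the improved system."""
    baseline_err = error_rate(baseline_accuracy_pct)
    improved_err = error_rate(improved_accuracy_pct)
    return (baseline_err - improved_err) / baseline_err


without_guidance = 89.29  # accuracy reported without AI Guidance
with_guidance = 97.50     # accuracy reported for the production system

lift_points = with_guidance - without_guidance  # absolute lift in percentage points
reduction = relative_error_reduction(without_guidance, with_guidance)
print(f"lift: {lift_points:.2f} points, error reduction: {reduction:.1%}")
```

Run as written, this prints a lift of 8.21 points and an error reduction of 76.7%, in line with the rounded figures quoted in the text.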

Some AI Guidance does nudge the LLM towards better reasoning in absolute terms regardless of the opinions and preferences of the drafter of the standard. Other AI Guidance is purely an expression of individual preferences. Regardless, adding and improving AI Guidance is the most effective path to improvement in performance that we observe – aside from choice of foundational LLM.

Without System Optimization

If we remove Screens’ proprietary retrieval optimization techniques and CoT prompting, we can get a sense for how an off-the-shelf open-source RAG system would perform. Here we keep the foundation of the production system’s meta-prompt, but we remove all of the proprietary retrieval enhancements and optimizations that Screens layers onto the process.

- Accuracy: 92.36%

- Precision: 0.9739

- Recall: 0.9272

- F1: 0.9500

Comparing results with and without Screens’ proprietary system enhancements, we see a lift in accuracy of roughly 5 percentage points (from 92.36% to 97.50%). Put differently, we see roughly a 65% reduction in model errors by layering on advanced retrieval and CoT prompting techniques, as the error rate falls from 7.64% to 2.50%. Most of the improvement comes in the form of better recall: a jump from 0.9272 to 0.9822. This matches intuition: with advanced retrieval techniques, we’re substantially less likely to miss relevant context in the contract, which could result in false negatives.
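The recall improvement can also be read as a drop in the miss rate (the false negative rate, which is one minus recall). A short sketch using only the recall values reported above:

```python
def false_negative_rate(recall: float) -> float:
    """Share of truly positive cases the system misses (1 - recall)."""
    return 1.0 - recall


fnr_baseline = false_negative_rate(0.9272)    # without proprietary enhancements
fnr_production = false_negative_rate(0.9822)  # full production system

# Fraction of the baseline's misses that the production system avoids.
relative_drop = (fnr_baseline - fnr_production) / fnr_baseline
```

Under these reported values the miss rate falls from about 7.3% to about 1.8%.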

Foundational LLM Comparison

We can also compare performance across foundational LLMs. Keeping everything else constant, we see a range of accuracy across popular commercial LLMs.

A few notable findings:

- GPT-4o and Claude 3.5 Sonnet lead the pack, with GPT-4o on top.

- The OpenAI GPT-4 family of models generally outperforms, with performance gradually increasing from the original gpt-4 model (June 2023), through the gpt-4-turbo models (January-April 2024) and ultimately peaking at gpt-4o (May 2024).

- The Anthropic Claude 3 family of models underperforms OpenAI’s flagship models. Additionally, successfully completing the tasks being evaluated in this report required some additional meta-prompt engineering to enforce JSON output for Claude 3 models.

- GPT-3.5 underperforms dramatically. This underscores the importance of using the leading generation of LLMs for contract review in the legal domain.

Sanity Check

We’ve also included results for a sanity check evaluation run with all contract specific data removed from LLM calls. In this run, the LLM is given no information about the contract and told to make the same pass or fail decision based on the standard. This may seem like an unnecessary exercise at first glance, but it helps us understand how well the system works in a trivial case. We’d expect it to perform very poorly.

As we’d hope:

- Accuracy: 46.67%

- Precision: 0.9409

- Recall: 0.3393

- F1: 0.4987

In this run, the LLM responds with pass when it reasons that a standard should pass if the contract does not address it. For example, consider this simple standard:

There must not be a non-compete clause.

In this case, the correct answer is pass if the contract is empty since there is no non-compete clause.

Now this standard:

The agreement explicitly states that it is assignable by either party in the event of a corporate reorganization.

In this case, the standard should fail if there is nothing in the contract since it doesn’t explicitly reference assignment. Even without any information specific to the contract, the LLM will still use some reasoning to get to an answer, and that answer can be correct.

If, in the trivial case, your classifier is biased towards negative predictions and false positives are particularly rare, your precision will be high even when the model isn’t doing much of interest. This is why the precision is so high, why it’s important to look at trivial baselines like this, and why it’s important to focus on more balanced accuracy measures like F1 score.
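To make this effect concrete, the sketch below computes the four metrics from a confusion matrix. The counts are hypothetical and chosen only to illustrate the pattern; they are not the counts behind the sanity check results above.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # predicted positive, actually positive
    fp: int  # predicted positive, actually negative
    fn: int  # predicted negative, actually positive
    tn: int  # predicted negative, actually negative

    @property
    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.fn + self.tn)

    @property
    def precision(self) -> float:
        return self.tp / (self.tp + self.fp)

    @property
    def recall(self) -> float:
        return self.tp / (self.tp + self.fn)

    @property
    def f1(self) -> float:
        return 2 * self.tp / (2 * self.tp + self.fp + self.fn)


# Hypothetical classifier that rarely predicts positive: few false positives,
# many false negatives. These numbers are illustrative, not from the report.
toy = Confusion(tp=20, fp=1, fn=50, tn=29)
summary = {
    "accuracy": toy.accuracy,
    "precision": toy.precision,
    "recall": toy.recall,
    "f1": toy.f1,
}
```

With these toy counts, precision stays above 0.95 while recall is below 0.30 and F1 is below 0.50, which is the same shape as the sanity check results.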

Error Types

When prompting LLMs to make binary predictions (a standard is either met, or not met, in a contract), there are a number of distinct failure modes that warrant individual discussion and understanding. While from an end user perspective, results may be adequately described as wrong or inaccurate, as practitioners we want to take a more granular and descriptive approach. The first step to improving a system is to understand how exactly it falls short. To do this we differentiate error types as follows.

Retrieval Error

There are several techniques to employ to ask an LLM to determine if a standard is met in a contract. You can provide the LLM with an entire contract, a summary of the contract, or a subset of the text in the contract that you’ve deemed relevant to the standard. Regardless of the approach, there are failure modes where the LLM does not properly take into consideration the relevant sections of the contract.

Whether with one LLM call that has access to the entire contract or a more complex retrieval system, the LLM’s reasoning can omit context that is necessary to make the correct decision. In the legal domain, this failure mode is often due to cross referencing issues: a section that nominally contains the information required to make a decision might reference other parts of the contract or other documents incorporated by reference. This could be a defined term which is defined elsewhere in the contract, specific information about the parties outlined in the preamble, references to exceptions in other sections of the contract, or conflicting language between sections of the contract. Regardless, the LLM may come to the wrong decision when its reasoning leaves out this type of cross-referenced context.

This could be referred to more broadly as a hallucination. While the LLM isn’t making anything up out of thin air, it is omitting critical information that could result in a confident rationale and the wrong result.
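One simple mitigation for the cross-referencing issue is to follow explicit section references in a retrieved passage and add the cited sections to the context. The sketch below is a generic illustration and not a description of Screens’ retrieval system; the `Section N.N` citation pattern, the function name, and the sample clauses are all assumptions.

```python
import re

# Hypothetical citation pattern: "Section 8.3", "section 12", and so on.
SECTION_REF = re.compile(r"\bSection\s+(\d+(?:\.\d+)*)", re.IGNORECASE)


def expand_cross_references(section_number, sections):
    """Return the retrieved section plus any sections it cites by number.

    `sections` maps a section number such as "8.1" to its text.
    Only one hop of references is followed in this sketch.
    """
    text = sections[section_number]
    cited = [
        ref
        for ref in SECTION_REF.findall(text)
        if ref in sections and ref != section_number
    ]
    # Keep the retrieved section first and drop duplicate citations.
    ordered = list(dict.fromkeys([section_number] + cited))
    return [(number, sections[number]) for number in ordered]


# Toy contract text, written for illustration only.
sections = {
    "8.1": "Vendor's liability is capped at the fees paid, subject to Section 8.3.",
    "8.3": "The cap in Section 8.1 does not apply to indemnification obligations.",
    "12.2": "Either party may assign this Agreement with consent.",
}
context = expand_cross_references("8.1", sections)
```

Here the context for section 8.1 also includes section 8.3, whose exception changes how the cap should be read. Defined terms and documents incorporated by reference would need separate handling.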

Parsing Error

While the challenges of using LLMs as legal reasoning machines largely lie in the details of legal nuance, some of that challenge lives upstream in the process of converting raw documents into plain text. Even though multi-modal LLMs can treat contracts as images, it’s often more convenient and cost effective to convert various file types into plain text that a text only LLM can work with.

Familiar file types for contracts are Word Documents and PDFs. We also handle hosted web pages, as software vendors and other businesses often publicly host their standard click-through agreements (Terms of Service, MSAs, EULAs, Privacy Policies, Data Processing Agreements, etc.). It’s often an application-level requirement to parse these hosted documents directly from the web instead of requiring users to convert them into other document formats.

This presents potential information decay between what a human contract analyst sees when contracts are rendered via the appropriate application (PDF viewer, web browser, Microsoft Word, etc.) and what will be input to the LLM. There can be issues with OCR, document headers and footers can make their way into the middle of substantive paragraphs, images and tables can be hard to parse correctly, and more. In addition, many RAG systems require partitioning the contract into sections of text that are optimal when split logically using the intentional section and paragraph structure of the text. This is much easier with structured file formats like Word Documents and much harder for PDFs.
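As an illustration of splitting on a contract’s own structure, the sketch below partitions plain text on numbered headings. The heading pattern is an assumption that fits only cleanly extracted text; real contracts, and especially OCR output, would need something more robust.

```python
import re

# Hypothetical heading pattern: a line such as "1. Definitions" or "2.1 Fees".
HEADING = re.compile(r"^(\d+(?:\.\d+)*)\.?[ \t]+([A-Z].*)$", re.MULTILINE)


def split_into_sections(text):
    """Split contract text into sections at numbered headings.

    Text before the first heading (for example, a preamble) is ignored here.
    """
    matches = list(HEADING.finditer(text))
    sections = []
    for i, match in enumerate(matches):
        # A section body runs until the next heading or the end of the text.
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        sections.append(
            {
                "number": match.group(1),
                "title": match.group(2).strip(),
                "body": text[match.end():end].strip(),
            }
        )
    return sections


# Toy contract text, written for illustration only.
sample = """1. Definitions
"Services" means the hosted software.

2. Limitation of Liability
Vendor's liability is capped at fees paid in the prior 12 months.
"""
parsed = split_into_sections(sample)
```

A stray page header or footer that happens to begin with a number would be treated as a heading by this pattern, which is one way the parsing problems described above leak into retrieval.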

These issues can set LLMs up for failure before they even begin to reason about the legal content. Parsing errors compound with retrieval errors, resulting in scenarios where the LLM is not making decisions based on optimal information contained within the contract.

Reasoning Error

In the absence of Retrieval Errors and Parsing Errors, LLMs can still make reasoning mistakes even when given perfect context from the contract and a well written prompt. These are typically the more popular failure modes used by skeptics to critique LLMs and can be more striking to see when considering the use of LLMs for business-critical decision making. We see this take a number of forms:

- The LLM confuses the two parties of the agreement even when the context from the contract makes the party relationships and defined terms clear

- The LLM applies a more colloquial definition of a defined term even when the prompt or the contract clearly defines how a capitalized term should be considered

- Complex decision trees of exceptions and carve outs aren’t followed as instructed in prompts

- Subjective decisions aren’t made in the direction of common sense

- The LLM takes the prompt too literally resulting in a bad decision in a way that a reasonable human practitioner wouldn’t

- The LLM takes the prompt too generally resulting in a bad decision in a way that a reasonable human practitioner wouldn’t

- When the standard in the prompt isn’t addressed in the contract, the LLM makes the wrong inference as to whether that standard should be met

Despite intentional prompting and meta-prompting, these reasoning errors happen. We see them less frequently with stronger LLMs: models that perform better on conventional public evals are typically less prone to reasoning errors.

Guidance Error

Perhaps one of the more underrated failure modes of using LLMs to decide if standards are met in a contract is when a standard is not well-defined, contains contradictions, or assumes something but doesn’t make it explicit. We call these Guidance Errors. This is when the standard drafter has a specific exception, carve out, non-traditional definition, etc. in mind but doesn’t state it when defining the standard, and therefore the LLM has no knowledge of it. Other variations of this failure mode are catastrophic misspellings, contradictions, or overall lack of clarity when drafting standards.

This is best illustrated with an example. Consider the following standard:

The contract should limit the vendor's liability to 12 months’ fees.

This is a common liability cap. While any given individual contract reviewer may have a reasonable understanding of what they’d expect to pass or fail this standard, we see many edge cases in practice. If preferences for those edge cases are not clearly articulated, the LLM is left to assume and make a judgment call. Consider these scenarios:

- What if there are major exceptions to this liability cap that weaken it so substantially that it might as well not exist?

- What if this liability cap doesn’t apply to beta features? Or some specific set of features? What if the service is free?

- What if the liability is capped at either 12 months’ fees or some fixed amount, whichever is greater? Or whichever is lesser? What if the fixed amount is very large? Or trivially small?

- What if there is a secondary cap or a backup cap that applies under certain conditions at a much different amount than 12 months’ fees?

- What if the cap is only for fees paid for the services that gave rise to the liability rather than fees paid in general under the agreement?

In any of these cases, the LLM is left to assume. The leading LLMs are capable of reasoning through this type of nuance, but if they are told they must make a decision as to whether or not the standard passes, they might not make the same decision that you would if you don’t expose your preferences. If the standard drafter has answers to these questions in their head, embedded in institutional knowledge, or in a playbook that the LLM doesn’t have access to, there will be room for error. This error isn’t due to faulty reasoning, but rather to a lack of shared context. It resembles the kind of misunderstanding that highly intelligent humans might have, not due to a lack of intellectual horsepower, but due to miscommunication.
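One way to picture closing this gap is a standard that carries its drafter’s preferences with it. The sketch below is illustrative only: the `Standard` class, its `render` format, and the two guidance items are hypothetical and do not reflect how the Screens platform stores or applies AI Guidance.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Standard:
    text: str
    guidance: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the standard and any guidance as a prompt fragment."""
        lines = [f"Standard: {self.text}"]
        if self.guidance:
            lines.append("Guidance:")
            lines.extend(f"- {item}" for item in self.guidance)
        return "\n".join(lines)


liability_cap = Standard(
    text="The contract should limit the vendor's liability to 12 months' fees.",
    guidance=[
        # Hypothetical preferences, written for illustration only.
        "Fail if the cap is the greater of 12 months' fees and a fixed amount.",
        "Pass if the cap counts only fees for the services that gave rise to the liability.",
    ],
)
prompt_fragment = liability_cap.render()
```

Each guidance item answers one of the open questions listed above, so the model no longer has to guess the drafter’s preference for that case.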

We see a distribution of these error types in the results of this analysis. Our analysis of error types is largely a qualitative exercise. We’ve shared explanations of them to establish a shared understanding about what can go wrong when analyzing contracts with LLMs.

Reproducing the Analysis

This analysis can be loosely replicated using the same community screens that we used. These are publicly available. We’d expect to see similar results when screening contracts of the appropriate contract type with these screens. The community screens can be found here:

- 8 Deal Breakers in Tech Contracts

- Non-Disclosure Agreement Red Flags

- Negotiating Key Non-Solicitation Provisions in Vendor MSAs

- Customer Contract Checklist for M&A Due Diligence

- How to Contract: Low Risk Non-Disclosure Agreements

- Contracting Under GDPR: The Article 28 Guide

- Crucial DPA Compliance Checks: A Must-Read Guide

Any given contract may have unique issues that represent too small of a sample size to yield similar results. Reviewing 5-10 contracts with multiple screens in the list above may be necessary to produce a large enough sample size to replicate the results outlined in this report.
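To see why sample size matters when replicating an accuracy figure, the sketch below computes an approximate 95% Wilson score interval for an observed accuracy. The choice of interval is ours for illustration and is not part of the report’s methodology; the small-sample counts are hypothetical.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for an observed accuracy."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half_width, center + half_width


# 97.5% of the 720 test decisions corresponds to 702 correct decisions.
full_low, full_high = wilson_interval(702, 720)

# Hypothetical small replication: 39 correct out of 40 decisions (also 97.5%).
small_low, small_high = wilson_interval(39, 40)
```

The interval for 40 decisions is several times wider than the one for 720, so a handful of contracts can land well above or below the reported accuracy by chance alone.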

Acknowledgements

Thanks to the entire Screens team for contributing to this analysis. In particular, thanks to Max Buccelli and Milada Kostalkova for data labeling and tuning guidance, and to the Screens Community for providing us with publicly available and auditable screens for use in this analysis.